| Ryutarou Ohbuchi, Ph. D Graduate School of Engineering, University of Yamanashi, Yamanashi, Japan 大渕 竜太郎 (特任教授) Ryutarou Ohbuchi (Specially Appointed Professor) |

Ryutarou Ohbuchi

Specially Appointed Professor

Computer Science Department, University of Yamanashi

I teach in the Department of Computer Science and Engineering (コンピュータ理工学科), Faculty of Engineering, University of Yamanashi, located in Kofu City, Yamanashi Prefecture, Japan.

My publication list and resume

2023年度 (fiscal 2023)

This paper proposes a rotation-invariant method for analyzing 3D point sets by using self-attention.

刈込 喜大, 古屋 貴彦, 大渕 竜太郎, 自己注意機構を用いた3次元点群の回転不変な解析, 画像電子学会誌,52(4),2023.(オープンアクセス)(PDF)

Yoshihiro KARIKOMI, Takahiko FURUYA, Ryutarou OHBUCHI, Rotation-Invariant Analysis of 3D Point Set Using Self-Attention, The Journal of the Institute of Image Electronics Engineers of Japan Vol.52 No.4, 2023, (Open Access) (PDF)

2022年度 (fiscal 2022)

This paper proposes a novel approach for unsupervised learning of feature space suited for retrieval, not for classification.

T. Furuya and R. Ohbuchi, "DeepDiffusion: Unsupervised Learning of Retrieval-Adapted Representations via Diffusion-Based Ranking on Latent Feature Manifold," in IEEE Access, vol. 10, pp. 116287-116301, 2022, doi: 10.1109/ACCESS.2022.3218909. (Open Access)

This paper proposes a novel approach to 3D point set reconstruction. It projects patches of planar points into a higher-dimensional space to give them more degrees of freedom, so as to reconstruct higher-quality 3D shapes that retain geometrical details.

T Furuya, W Liu, R Ohbuchi, Z Kuang, "Hyperplane patch mixing-and-folding decoder and weighted chamfer distance loss for 3D point set reconstruction", The Visual Computer, 1-18 (2022) https://doi.org/10.1007/s00371-022-02652-6 (Open Access)

2020年度 (fiscal 2020)

This paper proposes scale adaptive feature pyramid networks (SAFPNs) for object detection in 2D images. The SAFPN employs weights chosen adaptively to each input image in fusing feature maps of the bottom-up pyramid and top-down pyramid. Scale adaptive weights for the fusion are computed by using a scale attention module built into the feature map fusion computation.

Lifei He, Ming Jiang, Ryutarou Ohbuchi, Takahiko Furuya, Min Zhang, and Pengfei Li, Scale Adaptive Feature Pyramid Networks for 2D Object Detection, Scientific Programming, Volume 2020, Article ID 8839979, doi:10.1155/2020/8839979. (Open access).

We propose and evaluate a novel object detection architecture called Cascaded Multi-Channel Feature Pyramid Network, or CM-FPN. The proposed network, which is based on Feature Pyramid Network by Lin et al., employs multi-stage cascaded top-down feature pyramid networks to extract more semantic multiresolution feature maps for object region proposal and object classification.

Lifei He, Ryutarou Ohbuchi, Ming Jiang, Takahiko Furuya, Min Zhang, Cascaded Multi-Channel Feature Fusion for Object Detection, in Proc. ICCCV'20: 2020 the 3rd International Conference on Control and Computer Vision, August 2020, Pages 11–16, doi.org/10.1145/3425577.3425580 (proof PDF)

This paper presents a rotation-invariant 3D point set segmentation algorithm. The algorithm employs a multi-resolution graph convolutional neural network for scale-aware and accurate segmentation of point sets. The input to the neural network is a rotation-invariant, hand-crafted local 3D shape feature for 3D point sets.

T. Furuya, X. Hang, R. Ohbuchi and J. Yao, "Convolution on Rotation-Invariant and Multi-Scale Feature Graph for 3D Point Set Segmentation," in IEEE Access, vol. 8, pp. 140250-140260, 2020, doi: 10.1109/ACCESS.2020.3012613 (Open access).

This paper presents a novel unsupervised deep representation learning algorithm for 3D shape features. The approach employs transcoding across multiple shape representations, e.g., across polygonal mesh, point set, and voxel representations.

Takahiko Furuya, Ryutarou Ohbuchi, Transcoding across 3D Shape Representations for Unsupervised Learning of 3D Shape Feature, Pattern Recognition Letters (2020), (Published online July 11, 2020) DOI: https://doi.org/10.1016/j.patrec.2020.07.01 (Proof PDF)

On June 3, 2020, the following paper received the "Outstanding Paper Award" from the Information Processing Society of Japan. The award went to 6 papers out of the 563 papers published during the period October 2018 to September 2019.

上西 和樹, 古屋 貴彦, 大渕 竜太郎, 敵対的生成ネットワークを用いた3次元点群形状特徴量の教師なし学習,情報処理学会論文誌, 第60巻,第7号, pp1315-1324, (PDF)

(受賞: 2020年6月3日,情報処理学会より 情報処理学会論文賞を受賞.2018年10月~2019年9月に発表された563論文の中から6編が受賞.)

Kazuki Uenishi, Takahiko Furuya, Ryutarou Ohbuchi, Unsupervised Representation Learning for 3D Point Set by Using Generative Adversarial Neural Network, Journal of Information Processing Society of Japan, Volume 60, No.7, pp.1315-1324, 2019/07/15,

(ISSN 1882-7764),

(Permalink: http://id.nii.ac.jp/1001/00198263/), in Japanese. (PDF)

(Awarded: "Outstanding Paper Award", which is awarded to 6 papers out of 563 papers published in IPSJ journals during October 2018~September 2019. (IPSJ: Information Processing Society of Japan).)

2019年度 (fiscal 2019)

This paper addresses 3D shape retrieval that uses partially drawn sketches as queries.

S. Kuwabara, R. Ohbuchi and T. Furuya, "Query by Partially-Drawn Sketches for 3D Shape Retrieval," 2019 International Conference on Cyberworlds (CW), Kyoto, Japan, 2019, pp. 69-76. doi: 10.1109/CW.2019.00020 (PDF)

This paper presents a novel unsupervised deep representation learning algorithm for comparison among sets, where each set consists of unordered feature vectors.

Takahiko Furuya, Ryutarou Ohbuchi, Feature set aggregator: unsupervised representation learning of sets for their comparison, Multimedia Tools and Applications, 78, 35157–35178 (2019), DOI: 10.1007/s11042-019-08078-y (PDF)

To search or to cluster 3D models based on shape, we need a shape descriptor. This work proposes an unsupervised learning method that trains a DNN on unlabeled 3D shape models to extract such shape descriptors. We adopted adversarial learning as the approach.

上西 和樹, 古屋 貴彦, 大渕 竜太郎, 敵対的生成ネットワークを用いた3次元点群形状特徴量の教師なし学習,情報処理学会論文誌, 第60巻,第7号, pp1315-1324, (PDF)

(受賞: 情報処理学会より 情報処理学会論文賞を受賞.2018年10月~2019年9月に発表された563論文の中から6編が受賞.)

(受賞: 情報処理学会より 情報処理学会論文誌ジャーナル/JIP特選論文を受賞)

Kazuki Uenishi, Takahiko Furuya, Ryutarou Ohbuchi, Unsupervised Representation Learning for 3D Point Set by Using Generative Adversarial Neural Network, Journal of Information Processing Society of Japan, Volume 60, No.7, pp.1315-1324, 2019/07/15,

(ISSN 1882-7764),

(Permalink: http://id.nii.ac.jp/1001/00198263/), in Japanese. (PDF)

(Awarded: "Outstanding Paper Award", which is awarded to 6 papers out of 563 papers published in IPSJ journals during October 2018~September 2019. (IPSJ: Information Processing Society of Japan).)

(Awarded: "Selected paper" among papers published in IPSJ journals (IPSJ: Information Processing Society of Japan).)

This paper proposes a method to add text labels to the whole and to the parts of a 3D model by using deep feature embedding. To compare totally different data objects, namely text words and 3D shape models, feature vectors from those heterogeneous data modalities are transformed by deep neural networks into feature vectors in a common feature embedding space.

Kouki Omata, Takahiko Furuya, Ryutarou Ohbuchi, Annotating 3D models and their parts via deep feature embedding, Proc. 2019 IEEE International Conference on Multimedia & Expo Workshops (ICMEW), Workshop on Cross-media Big Data Analysis for Semantic Knowledge Understanding, Shanghai, China, July 8-12, 2019, 8 pages, DOI: 10.1109/ICMEW.2019.00090 (PDF)

2018年度 (fiscal 2018)

To compare 2D sketches with 2D images, a training set consisting of pairs of pictures and sketches is needed. However, such a dataset with enough size, diversity, and balance is difficult to come by. In this paper, a DNN called Photo2Sketch, which converts images into their sketch-like counterparts, is trained in a generative-adversarial framework to generate a synthetic dataset.

Takahiko Furuya, Ryutarou Ohbuchi, Data augmentation via photo-to-sketch translation for sketch-based image retrieval, Proceedings Volume 11069, Tenth International Conference on Graphics and Image Processing (ICGIP 2018); 1106925 (May, 2019), https://doi.org/10.1117/12.2524230

2017年度 (fiscal 2017)

This paper retrieves whole 3D models from queries that are their parts, by learning approximate inclusion (part-in-whole) relationships between 3D models and their parts.

Takahiko Furuya, Ryutarou Ohbuchi, Learning part-in-whole relation of 3D shapes for part-based 3D model retrieval, Computer Vision and Image Understanding, Elsevier, Volume 166, January 2018, Pages 102-114 (https://doi.org/10.1016/j.cviu.2017.11.007) (PDF)

In this work, we tried to improve the space and time efficiency of the DLAN algorithm [Furuya2016] by hashing its feature into the binary domain. We succeeded in retaining retrieval accuracy while compressing the feature significantly.

Takahiko Furuya, Ryutarou Ohbuchi, Deep semantic hashing of 3D geometric features for efficient 3D model retrieval, short paper, Proceedings of the Computer Graphics International Conference (CGI 2017), Article No. 8 , Yokohama, Japan, June 26-June 30, 2017. (DOI:10.1145/3095140.3095148) (PDF)

SHREC 2017: Large-Scale 3D Shape Retrieval from ShapeNet Core55

This track of the annual Shape REtrieval Contest 2017 aims at retrieving 3D shapes, queried by 3D shapes, from a large-scale database. There are two tasks in this track. The first, called "Normal", retrieves from the database as is. The other, "Perturbed", retrieves from the database after its objects have been randomly rotated. We won, hands down, the "Perturbed" task by using the DLAN algorithm [Furuya2016], which combines the point-set-based 3D geometrical feature POD [Furuya, 2015] with deep learning (a 3D convolutional neural network). We ranked second to third in the "Normal" task.

Manolis Savva, Fisher Yu, Hao Su, Asako Kanezaki, Takahiko Furuya, Ryutarou Ohbuchi, Zhichao Zhou, Rui Yu, Song Bai, Xiang Bai, Masaki Aono, Atsushi Tatsuma, S. Thermos, A. Axenopoulos, G. Th. Papadopoulos, P. Daras, Xiao Deng, Zhouhui Lian, Bo Li, Henry Johan, Yijuan Lu, and Sanjeev Mk, Large-Scale 3D Shape Retrieval from ShapeNet Core55, Proc. Eurographics Symposium on 3D Object Retrieval, Lyon, France, April 23-24, 2017. (PDF)

(DOI:10.2312/3dor.20171050)

SHREC 2017: Deformable Shape Retrieval with Missing Parts

This track of the annual Shape REtrieval Contest 2017 consists of two tasks, the "Range" challenge and the "Holes" challenge. We entered the "Holes" challenge, and won (came in 1st!). The "Holes" challenge tries to retrieve deformed 3D shapes that are fragmented ("cracks" divide the 3D models into parts) and have missing parts (e.g., there are holes where fragments are missing). We used our DLAN algorithm [Furuya2016], which is a hybrid of a hand-crafted 3D shape feature with deep learning (a 3D convolutional neural network). Instead of the POD feature, we used the Localized Statistical Feature (LSF) [Ohkita, 2012] for its robustness against noise.

E. Rodolà, L. Cosmo, O. Litany, M. M. Bronstein, A. M. Bronstein, N. Audebert, A. Ben Hamza, A. Boulch, U. Castellani, M. N. Do, A-D. Duong, T. Furuya, A. Gasparetto, Y. Hong, J. Kim, B. Le Saux, R. Litman, M. Masoumi, G. Minello, H-D. Nguyen, V-T. Nguyen, R. Ohbuchi, V-K. Pham, T. V. Phan, M. Rezaei, A. Torsello, M-T. Tran, Q-T. Tran, B. Truong, L. Wan, and C. Zou, Deformable Shape Retrieval with Missing Parts, Proc. Eurographics Symposium on 3D Object Retrieval, Lyon, France, April 23-24, 2017. (PDF) (DOI:10.2312/3dor.20171057)

2016年度 (fiscal 2016)

軽量な局所 2 値特徴を用いた 3 次元形状の比較

本論文では,大規模3Dデータ解析の効率化を狙った,ボクセル表現向きの軽量な局所3D幾何特徴量を提案します.提案する3DBRIEFと3DORBは,ボクセルから2値特徴を直接抽出する局所3D幾何特徴量で,省メモリで,かつ,抽出と比較が高速です.具体的には,局所領域の向き揃えの後,1対のボクセル値の大小比較により局所領域内を記述する特徴量の1bitを決めます.これをN回繰り返すことでNビットの特徴量となります.このような特徴量は2D画像については知られていましたが,3D幾何特徴では初めてです.

This paper proposes a set of novel lightweight local 3D geometric features for efficient analysis of large-scale 3D data. Proposed features, 3DBRIEF and 3DORB, are binary local features for 3D voxels. They are fast to extract, and compact to store and compare. Each bit of the binary feature is computed very efficiently by comparing values of a pair of voxels.

松田隆広, 古屋貴彦, 大渕竜太郎, 軽量な局所 2 値特徴を用いた 3 次元形状の比較, 情報処理学会論文誌, 57(11), pp. 2456-2466, 2016/11/15. (Permalink: http://id.nii.ac.jp/1001/00175929/ )
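The pairwise voxel comparison described above can be sketched in a few lines. This is a minimal illustration only, not the published 3DBRIEF or 3DORB implementation: the patch layout (nested lists), the random choice of test pairs, the fixed seed, and the function names `make_test_pairs` and `binary_descriptor` are all assumptions made for the example, and the orientation-alignment step of the local region is omitted.

```python
import random


def make_test_pairs(size, n_bits, seed=0):
    """Draw n_bits random pairs of voxel coordinates inside a size^3 patch.

    Hypothetical helper: the sampling pattern of the actual features is
    not specified here, so uniform random pairs are assumed.
    """
    rng = random.Random(seed)

    def rand_voxel():
        return (rng.randrange(size), rng.randrange(size), rng.randrange(size))

    return [(rand_voxel(), rand_voxel()) for _ in range(n_bits)]


def binary_descriptor(patch, pairs):
    """Set bit i when the first voxel of pair i is smaller than the second."""
    code = 0
    for i, ((x1, y1, z1), (x2, y2, z2)) in enumerate(pairs):
        if patch[x1][y1][z1] < patch[x2][y2][z2]:
            code |= 1 << i
    return code


# Toy 4x4x4 voxel patch with arbitrary values.
size = 4
patch = [[[(x + 2 * y + 3 * z) % 5 for z in range(size)]
          for y in range(size)]
         for x in range(size)]

pairs = make_test_pairs(size, n_bits=32)
code = binary_descriptor(patch, pairs)
print(format(code, "032b"))  # a 32-bit binary feature for this patch
```

Because each bit costs one comparison and the result fits in an integer, such a feature is cheap to extract and to store, which is the property the summary above emphasizes.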

教師なし距離計量学習を用いたイラスト描画スタイルの比較

描画スタイルに基づく画像検索のための,局所視覚特徴量と教師なし距離計量学習を組み合わせた手法を提案します.数千枚のイラスト画像を含むベンチマークで評価した結果,大域視覚特徴量と教師あり学習を用いる従来手法よりも,検索精度が向上しました.

This paper proposes an algorithm to compare illustrations based on their drawing styles. We use local visual features and unsupervised learning to achieve better accuracy than the previous algorithm, which used a set of global features and supervised learning.

古屋貴彦, 栗山繁, 大渕竜太郎, 教師なし距離計量学習を用いたイラスト描画スタイルの比較, 電子情報通信学会論文誌 D, Vol.J99-D, No.8, pp.709-717, 2016/08/01, (Online ISSN: 1881-0225)

Deep Aggregation of Local 3D Geometric Features for 3D Model Retrieval

In this paper, we propose a novel deep neural network for 3DMR called Deep Local feature Aggregation Network (DLAN) that combines extraction of rotation-invariant 3D local features and their aggregation in a single deep architecture. The DLAN describes local 3D regions of a 3D model by using a set of 3D geometric features invariant to local rotation. The DLAN then aggregates the set of features into a (global) rotation-invariant and compact feature per 3D model. Experimental evaluation shows that the DLAN outperforms the existing deep learning-based 3DMR algorithms.

Takahiko Furuya, Ryutarou Ohbuchi, Deep Aggregation of Local 3D Geometric Features for 3D Model Retrieval, poster paper, Proc. British Machine Vision Conference (BMVC) 2016, September 19-22, York, UK. (2016) (Paper PDF. Extended abstract PDF). (Paper link at BMVA website)

Accurate Aggregation of Local Features by using K-sparse Autoencoder for 3D Model Retrieval

This paper presents two methods to aggregate local features accurately by using a k-sparse autoencoder (kSA). The first method uses a trained kSA directly for encoding, jointly optimizing the codebook and the feature encoding. The second method exploits the reconstruction error of the kSA for encoding. Experimental evaluation of the methods, using multiple local features in a 3D model retrieval setting, has shown that the proposed algorithm performs as well as or better than previous feature aggregation methods.

Takahiko Furuya, Ryutarou Ohbuchi, Accurate Aggregation of Local Features by using K-sparse Autoencoder for 3D Model Retrieval, short paper, Proc. ACM International Conference on Multimedia Retrieval 2016 ( ICMR2016), June 6-9, New York, NY, USA. (2016) (PDF). (DOI: 10.1145/2911996.2912054)

Aggregating sparse binarized local features by summing for efficient 3D model retrieval

This paper presents a pair of methods to aggregate local features into a compact feature vector via binary encoding of each local feature. By using a highly sparse binarized encoding, each encoded local feature is very compact. Aggregation of the binary-encoded features can be done efficiently via simple summing.

Takahiko Furuya, Ryutarou Ohbuchi, Aggregating sparse binarized local features by summing for efficient 3D model retrieval, Proc. of the Second IEEE Int’l Conf. on Multimedia Big Data (BigMM 2016), Oral paper, April 20-22, 2016, Taipei, Taiwan, (2016) (PDF). (DOI:10.1109/BigMM.2016.32)
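The idea of summing sparse binary codes can be illustrated with a small sketch. The encoder below, which switches on the bits of the k nearest codewords, is only an assumed stand-in for illustration and is not necessarily the encoding used in the paper; the codebook, the toy features, and the function names are likewise hypothetical.

```python
def sparse_binary_encode(feature, codebook, k=2):
    """Return the indices of the k codewords nearest to the feature.

    The returned index set stands for a sparse binary code whose
    bits are 1 at those indices and 0 elsewhere (assumed encoder).
    """
    dists = [sum((f - c) ** 2 for f, c in zip(feature, word))
             for word in codebook]
    order = sorted(range(len(codebook)), key=lambda i: dists[i])
    return set(order[:k])


def aggregate_by_sum(features, codebook, k=2):
    """Sum the sparse binary codes of all local features of one model."""
    aggregated = [0] * len(codebook)
    for feature in features:
        for index in sparse_binary_encode(feature, codebook, k):
            aggregated[index] += 1
    return aggregated


# Toy codebook of four 2D codewords and three local features.
codebook = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
features = [(0.1, 0.0), (0.9, 0.1), (0.2, 0.1)]

print(aggregate_by_sum(features, codebook, k=1))  # [2, 1, 0, 0]
```

Since each code has only k active bits, aggregation touches k counters per local feature, regardless of the codebook size.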

SHREC 2016 Track on Partial Shape Queries for 3D Object Retrieval

Ioannis Pratikakis, Michalis A. Savelonas, Fotis Arnaoutoglou, George Ioannakis, Anestis Koutsoudis, Theoharis Theoharis, Minh-Triet Tran, Vinh-Tiep Nguyen, V.-K. Pham, Hai-Dang Nguyen, Hoang-An Le, Ba-Huu Tran, Huu-Quan To, Minh-Bao Truong, Thuyen Van Phan, Minh-Duc Nguyen, Thanh-An Than, Cu-Khoi-Nguyen Mac, Minh N. Do, Anh-Duc Duong, Takahiko Furuya, Ryutarou Ohbuchi, Masaki Aono, Shoki Tashiro, David Pickup, Xianfang Sun, Paul L. Rosin, and Ralph R. Martin, Partial Shape Queries for 3D Object Retrieval, Proc. Eurographics Workshop on 3D Object Retrieval, May 8, 2016, Lisbon, Portugal (2016). (PDF) (DOI:10.2312/3dor.20161091)

2015年度 (fiscal 2015)

Diffusion-on-Manifold Aggregation of Local Features for Shape-based 3D Model Retrieval

Aggregation of local features has a large impact on the accuracy and cost of object recognition in, or retrieval of, images or 3D shape models. The classic bag-of-words or bag-of-features approach has been followed by such methods as Vector of Locally Aggregated Descriptors (VLAD), Fisher Vector (FV) coding, Super Vector (SV) coding, and Locality-constrained Linear Coding (LLC). We propose a new feature aggregation method called Diffusion-on-Manifold (DM) that exploits the structure of the potentially non-linear manifold of local features.

Our experimental evaluation using 3D model retrieval setting shows that DM often outperforms previous feature aggregation methods such as FV, SV, LLC, or VLAD.

We also propose a new local 3D geometrical feature called Position and Orientation Distribution (POD) used in the evaluation experiment.

Takahiko Furuya and Ryutarou Ohbuchi, Diffusion-on-Manifold Aggregation of Local Features for Shape-based 3D Model Retrieval, oral paper, Proc. ACM International Conference on Multimedia Information Retrieval (ICMR) 2015, Shanghai, China, June 23-26, 2015 (PDF).

An Unsupervised Approach for Comparing Styles of Illustrations

In this paper, we propose an unsupervised approach to achieve accurate and efficient stylistic comparison among illustrations. The proposed algorithm combines heterogeneous local visual features extracted densely. These features are aggregated into one feature vector per illustration, which is then processed by distance metric learning based on unsupervised dimension reduction for saliency and compactness.

Takahiko Furuya, Shigeru Kuriyama, and Ryutarou Ohbuchi, An Unsupervised Approach for Comparing Styles of Illustrations, oral paper, Proc. 13th International Workshop on Content-Based Multimedia Indexing (CBMI) 2015, Prague, Czech Republic, June 10-12, 2015, (PDF).

Scalable Part-Based 3D Model Retrieval by using Randomized Sub-Volume Partitioning

This paper presents a scalable algorithm for part-based 3D model retrieval. Given a part-based query, e.g., a 3D model of a jet engine, the system searches through a 3D model database and retrieves 3D models that contain, as their subparts, shape(s) similar to the jet engine. The algorithm employs the Randomized Sub-Volume Partitioning (RSVP) algorithm, accelerated by using late-binding local feature aggregation. To accelerate the search through the large number of subvolumes (e.g., 1350 subvolumes per 3D model, and 50k 3D models per database), the algorithm combines feature dimensionality reduction with hashing into compact binary codes.

Takahiko Furuya, Seiya Kurabe, and Ryutarou Ohbuchi, Randomized Sub-Volume Partitioning for Part-Based 3D Model Retrieval, pp.15-21, oral paper, Proc. Eurographics Workshop on 3D Object Retrieval (EG 3DOR) 2015, Zurich, Switzerland, May 2-3, 2015, DOI:10.2312/3dor.20151050 (PDF).

3D SHape REtrieval Contest (SHREC) 2015 results

There are 9 tracks for this year's 3D SHape REtrieval Contest (SHREC) 2015. We participated in the following two tracks, and finished 1st in both of the tracks!

Non-rigid 3D Shape Retrieval: 1st place

The task of the Non-rigid 3D Shape Retrieval track is to retrieve highly articulated and/or deformable 3D shapes. The competition was tight, with many teams achieving high accuracy, as the evaluation results show. In the end, of the 11 teams that participated, we finished 1st.

We combined our LSF 3D local statistical shape feature, aggregated by using Super Vector Coding, with (single-domain) unsupervised similarity metric learning by using Manifold Ranking by Zhou, et al.

Lian, Z.; Zhang, J.; Choi, S.; ElNaghy, H.; El-Sana, J.; Furuya, T.; Giachetti, A.; Guler, R. A.; Lai, L.; Li, C.; Li, H.; Limberger, F. A.; Martin, R.; Nakanishi, R. U.; Neto, A. P.; Nonato, L. G.; Ohbuchi, R.; Pevzner, K.; Pickup, D.; Rosin, P.; Sharf, A.; Sun, L.; Sun, X.; Tari, S.; Unal, G.; Wilson, R. C. , Non-rigid 3D Shape Retrieval, pp.107-120, Proc. Eurographics Workshop on 3D Object Retrieval (EG 3DOR) 2015, Zurich, Switzerland, May 2-3, 2015, DOI:10.2312/3dor.20151064
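For readers unfamiliar with Manifold Ranking, the following is a minimal sketch of its commonly described iterative form, f ← αSf + y, where S is the symmetrically normalized affinity matrix and y marks the query. It is not our contest entry: the Gaussian affinity, the parameter values (`sigma`, `alpha`, `iters`), the toy 1D points, and the function name are assumptions chosen for illustration.

```python
import math


def manifold_ranking(points, query_index, sigma=1.0, alpha=0.9, iters=200):
    """Rank points by relevance to a query by diffusing scores on a graph."""
    n = len(points)

    # Gaussian affinity matrix W with a zero diagonal (assumed kernel).
    w = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j:
                d2 = sum((a - b) ** 2 for a, b in zip(points[i], points[j]))
                w[i][j] = math.exp(-d2 / (2.0 * sigma ** 2))

    # Symmetric normalization S = D^(-1/2) W D^(-1/2).
    deg = [sum(row) for row in w]
    s = [[w[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
         for i in range(n)]

    # y is 1 at the query and 0 elsewhere; iterate f <- alpha * S f + y.
    y = [1.0 if i == query_index else 0.0 for i in range(n)]
    f = y[:]
    for _ in range(iters):
        f = [alpha * sum(s[i][j] * f[j] for j in range(n)) + y[i]
             for i in range(n)]
    return f


# Two clusters on a line; the query is the point at 0.
points = [(0.0,), (1.0,), (2.0,), (10.0,), (11.0,)]
scores = manifold_ranking(points, query_index=0)
ranking = sorted(range(1, len(points)), key=lambda i: -scores[i])
print(ranking)  # database points ordered by diffused relevance
```

Points in the same cluster as the query receive much higher scores than points in the distant cluster, because relevance spreads along the neighborhood graph rather than by direct distance alone.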

Range-Scans based 3D Shape Retrieval: 1st place

The task of the Range-Scans based 3D Shape Retrieval track is to retrieve (complete) 3D shapes based on single-view range-scan data. Six teams participated in this track. As the evaluation results show, we finished 1st in the track by quite a large margin.

We tried to exploit shape similarities among (full) 3D models as well as the similarity between single-view range-scan data (the query) and (full) 3D models in the database. To do so, we employed our Cross-Domain Manifold Ranking (CDMR) similarity metric learning algorithm. To compare a range-scan query with (full) 3D models, we used our view-based algorithm updated with Super Vector Coding. To compare among (full) 3D models, we used our 3D Visual Feature Fusion (3DVFF) algorithm, which effectively fuses multiple visual features.

Godil, A.; Dutagaci, H.; Bustos, B.; Choi, S.; Dong, S.; Furuya, T.; Li, H.; Link, N.; Moriyama, A.; Meruane, R.; Ohbuchi, R.; Paulus, D.; Schreck, T.; Seib, V.; Sipiran, I.; Yin, H.; Zhang, C., Range Scans based 3D Shape Retrieval, pp.153-160, Proc. Eurographics Workshop on 3D Object Retrieval (EG 3DOR) 2015, Zurich, Switzerland, May 2-3, 2015, DOI:10.2312/3dor.20151069

Lightweight binary voxel shape features for 3D data matching and retrieval

In this paper, we propose lightweight features for voxel-based 3D shape definitions. The features, called 3DBRIEF and 3DORB, are inspired by the simple, lightweight 2D local image features BRIEF by Calonder et al. and ORB by Rublee et al. These features produce a binary bitstring as their feature vector. They have low computational costs and small memory footprints. We extend 3DORB, which is the 3D version of ORB, for improved retrieval accuracy, albeit at a higher computational cost. We evaluate the features in a shape-similarity-based 3D model retrieval setting by using a Bag-of-Features framework in Hamming space to aggregate the set of 3DORB or 3DBRIEF features extracted from a 3D model.

Takahiro Matsuda, Takahiko Furuya, Ryutarou Ohbuchi, Lightweight binary voxel shape features for 3D data matching and retrieval, Oral paper, Proc. First IEEE Int’l Conf. on Multimedia Big Data (BigMM) 2015, 20-22 April 2015, Beijing, China. (PDF)

2014年度 (fiscal 2014)

Hashing Cross-Modal Manifold for Scalable Sketch-based 3D Model Retrieval

Similarity metric learning performed across the sketch and 3D model domains, described in our previous paper (Springer MTAP journal), improves the retrieval accuracy of sketch-based 3D model retrieval. However, it was rather slow for a 3D model database of substantial size, e.g., one containing 100k or more 3D models. In this paper, we propose an algorithm to speed up the retrieval phase of the cross-domain similarity metric learning algorithm by combining feature dimensionality reduction with hashing into Hamming (binary feature vector) space. Similarity computation between a pair of binary vectors of a few hundred bits in Hamming space is very fast, so that a database having 100k or more 3D models can be searched in about a second. Also, the small size of the binary vectors permits in-main-memory or on-GPU-memory processing. The proposed algorithm also employs a set of improved view-based features based on the work reported in the BMVC 2014 paper. As a result, our proposed method is both accurate and efficient.

Takahiko FURUYA and Ryutarou OHBUCHI, Hashing Cross-Modal Manifold for Scalable Sketch-based 3D Model Retrieval, Oral paper, Proceedings of International Conference on 3D Vision (3DV) 2014, Tokyo, Japan, December 8-11, 2014. (Paper_PDF, Talk slides_PDF)
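The speed of Hamming-space search comes from comparing bit strings with an XOR followed by a bit count. The sketch below shows only that search step under stated assumptions: binarizing by the sign of each component is a simple placeholder rather than the hashing method of the paper, and the 8-bit codes, the names `binarize`, `hamming`, and `search` are hypothetical.

```python
def binarize(vector):
    """Pack the signs of a real-valued vector into an integer bit code.

    Sign thresholding is an assumed placeholder for a learned hash.
    """
    code = 0
    for i, value in enumerate(vector):
        if value > 0:
            code |= 1 << i
    return code


def hamming(a, b):
    """Number of differing bits between two integer bit codes."""
    return bin(a ^ b).count("1")


def search(query_code, database, top_k=3):
    """Return indices of the top_k database codes nearest to the query."""
    ranked = sorted(range(len(database)),
                    key=lambda i: hamming(query_code, database[i]))
    return ranked[:top_k]


# Toy database of 8-bit codes; real codes would be a few hundred bits.
database = [0b10110010, 0b10110011, 0b01001101, 0b11110000]
query = 0b10110110

print(search(query, database))       # [0, 1, 3]
print(binarize([0.5, -1.0, 2.0]))    # 5, i.e. bits 0 and 2 set
```

Codes this small can be kept entirely in main memory or GPU memory, which is why a linear scan over a large database remains practical.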

A comparison of 3D shape retrieval methods based on a large-scale benchmark supporting multimodal queries

This article compares various methods for 3D model retrieval by using multimodal queries, e.g., by 3D model and by sketch.

Bo Li, Yijuan Lu, Chunyuan Li, Afzal Godil, Tobias Schreck, Masaki Aono, Martin Burtscher, Qiang Chen, Nihad Karim Chowdhury, Bin Fang, Hongbo Fu, Takahiko Furuya, Haisheng Li, Jianzhuang Liu, Henry Johan, Ryuichi Kosaka, Hitoshi Koyanagi, Ryutarou Ohbuchi, Atsushi Tatsuma, Yajuan Wan, Chaoli Zhang, Changqing Zou, A comparison of 3D shape retrieval methods based on a large-scale benchmark supporting multimodal queries, Computer Vision and Image Understanding, doi:10.1016/j.cviu.2014.10.006

Fusing Multiple Features for Shape-based 3D Model Retrieval

This work proposes the Multi-Feature Manifold Ranking (MFAMR) algorithm for joint distance metric learning among multiple feature vectors in high-dimensional space. MFAMR, a variation of manifold-based distance metric learning, produces a better overall distance (or similarity) among objects, each of which is described by more than one feature, than a simple linear combination of the per-feature distances. For efficiency, anchoring is employed to reduce the number of feature points that form the manifold. The paper also updates our BF-DSIFT (ca. 2009) algorithm to the year 2014 by employing Super Vector coding to aggregate local features (SIFT features) per 3D model, resulting in the SV-DSIFT feature. In addition, the paper proposes LL-MO1SIFT for rigid object comparison by using Locality-constrained Linear Coding (LLC).

The combination of the MFAMR and two updated features, SV-DSIFT and LL-MO1SIFT, significantly improves accuracy of 3D-model to 3D-model comparison.

Takahiko FURUYA and Ryutarou OHBUCHI, Fusing Multiple Features for Shape-based 3D Model Retrieval, Oral paper, Proceedings of British Machine Vision Conference (BMVC) 2014, Nottingham, U.K., September 1-September 5, 2014. (PDF) (Acceptance rate is 7.7% (33/431) for oral papers and 30% (131/431) for combined poster and oral papers.)

Similarity Metric Learning on Cross-Domain Manifold for Sketch-based 3D Object Retrieval

Takahiko Furuya applied cross-domain similarity metric learning to improve accuracy of sketch-based 3D model retrieval. It relates sketches and 3D models, which are in different feature domains, by using sketch-to-sketch similarity, sketch-to-3D model similarity, and 3D model-to-3D model similarity. In addition, if available, class labels may be recruited for semi-supervised learning.

Takahiko FURUYA and Ryutarou OHBUCHI, Similarity Metric Learning for Sketch-based 3D Object Retrieval, Multimedia Tools and Applications (MTAP), Springer, DOI: 10.1007/s11042-014-2171-3 (published online, July 2014) (PDF)

画像電子学会誌 (2013~2014年度) 最優秀論文賞を受賞!!

古屋 貴彦さんと大渕 竜太郎の執筆した以下の論文が,画像電子学会誌に2013~2014年度の2年間に掲載された全論文の中から2編以内の論文の著者らに贈られる「最優秀論文賞」を受賞しました.(2014年6月29日の受賞式の写真を追加しました.2014年7月1日更新)

The following paper, co-authored by Takahiko Furuya and myself (Ryutarou Ohbuchi), received the "Best paper" award from the Institute of Image Electronics Engineers of Japan (IIEEJ). It is a biennial award given to two papers selected from all the papers published in the Journal of IIEEJ during the past two years, i.e., 2012 and 2013. (Updated: June 29, 2014 with the picture of the award ceremony.)

Award ceremony on June 29, 2014.

古屋貴彦,大渕竜太郎, 見かけ特徴の組み合わせと距離尺度の学習を用いた3次元形状類似検索, 画像電子学会誌, 第42巻,4号, pp.438-447, 2013年8月. (2012-2013年度 画像電子学会 最優秀論文賞を受賞)

Takahiko FURUYA, Ryutarou OHBUCHI, Visual Feature Combination and Distance Metric Learning for 3D Shape Retrieval, The Journal of the Institute of Image Electronics Engineers of Japan (Journal of the IIEEJ), Vol.42, No.4, pp.438-447, August 2013. (PDF in Japanese)

(http://www.iieej.org/gakkaishi3/IIEEJ_Vol42-No4ori.pdf) (Received the "Best paper" award from the Institute of Image Electronics Engineers of Japan (IIEEJ), which was awarded to two papers published during the years 2012 and 2013. A biennial award.)

The BF-DSIFT paper is one of the "Most cited papers before the era of ICMR" for CIVR 2009, according to ACM SIGMM Records

古屋 貴彦さんと大渕がACM International Conference on Image and Video Retrieval (CIVR) 2009 で発表した以下の論文が,ACM SIGMM Records, Volume 6, Issue 1, March, 2014 の記事の中で「ICMRコンファレンス以前(のマルチメディア検索関連の国際学会)に最も参照された論文」の一つに選ばれました.具体的には,Google Scholarによる被参照数が,ACM CIVR 2009で発表された論文中4位(2014年2月17-18日現在で57件)だったそうです.(現在のACM Digital Libraryによる被参照数とGoogle Scholarによる被参照数.) また,この論文の元になった,Osadaさんらの"Salient local visual features for shape-based 3D model retrieval" (IEEE SMI 2008 )も多くの論文に参照されています.

The paper below by Takahiko Furuya and Ryutarou Ohbuchi that appeared in Proceedings of the CIVR 2009 has been recognized as one of "Most cited papers before the era of ICMR" in an article by Dr. Erwin M. Bakker that appeared in ACM SIGMM Records, Volume 6, Issue 1, March, 2014 (ISSN 1947-4958). It is the 4th most cited paper among the papers accepted for CIVR 2009. It received 57 Google Scholar citations as of February 17-18, 2014, according to the article. The paper is a part of Takahiko Furuya's master's thesis research. (Here are current citation counts of this paper according to ACM Digital Library and Google Scholar.)

According to Google Scholar Metrics, as of 2015/04/15, ACM CIVR ranks 17th among the subcategory "Multimedia" of publication venues. The ranking includes both journals and conference proceedings. Of all the papers published at the ACM CIVR conferences, our paper published at CIVR 2009 is ranked 4th in terms of h5-index, again as of 2015/04/15.

The paper describes Bag-of-Features Dense SIFT (BF-DSIFT) algorithm for shape-based 3D model to 3D model comparison.

Takahiko Furuya, Ryutarou Ohbuchi, Dense Sampling and Fast Encoding for 3D Model Retrieval Using Bag-of-Visual Features, Proc. ACM International Conference on Image and Video Retrieval 2009 (CIVR 2009), July 8-10, 2009, Santorini, Greece, (2009) (PDF) (DOI: 10.1145/1646396.1646430) (Recognized as one of "Most cited papers before the era of ICMR" in ACM SIGMM Records, Volume 6, Issue 1, March 2014 (ISSN 1947-4598).)

SHREC 2014: Extended Large Scale Sketch-Based 3D Shape Retrieval (We placed 2nd.)

スケッチを検索要求として3次元モデル検索の国際コンテストで2位!

This track of the SHape REtrieval Contest 2014 (SHREC 2014) compared the retrieval accuracy of sketch-based 3D model retrieval algorithms. Our algorithm by Takahiko Furuya placed 2nd. The first place went (again!) to our friends, Tatsuma and Aono's team at Toyohashi University of Technology. The algorithm by Tatsuma et al. combined a powerful visual feature with a clever unsupervised distance metric learning algorithm. Tatsuma's method won by a large margin. Congratulations (again!) to Prof. Aono and Prof. Tatsuma! (But we'll try to win the next time.)

B. Li, Y. Lu, C. Li, A. Godil, T. Schreck, M. Aono, M. Burtscher, H. Fu, T. Furuya, H. Johan, J. Liu, R. Ohbuchi, A. Tatsuma, and C. Zou, Extended Large Scale Sketch-Based 3D Shape Retrieval, Proc. Eurographics Workshop on 3D Object Retrieval 2014 (3DOR 2014), pp. 121-130, 2014. (DOI: 10.2312/3dor.20141058) (PDF) (full paper review)

SHREC 2014: Large Scale Comprehensive 3D Shape Retrieval (We placed 2nd.)

3次元モデルを検索要求として3次元モデルを検索する国際コンテストで2位!

This track of the SHape REtrieval Contest 2014 (SHREC 2014) compared the retrieval accuracy of 3D model retrieval algorithms that use 3D model examples as queries. Our algorithm by Takahiko Furuya placed 2nd. The first place went to our friends, Tatsuma and Aono's team at Toyohashi University of Technology, Japan. They also ranked first in the sketch-based retrieval track above. Congratulations to Prof. Aono and Prof. Tatsuma! (But we'll try to win the next time.) Prof. Aono is a good friend of Ohbuchi's; we are ex-colleagues from IBM Research.

B. Li, Y. Lu, C. Li, A. Godil, T. Schreck, M. Aono, Q. Chen, N. K. Chowdhury, B. Fang, T. Furuya, H. Johan, R. Kosaka, H. Koyanagi, R. Ohbuchi, A. Tatsuma, Large Scale Comprehensive 3D Shape Retrieval, Proc. Eurographics Workshop on 3D Object Retrieval 2014 (3DOR 2014), pp. 131-140, 2014. (DOI: 10.2312/3dor.20141059) (PDF) (full paper review)

2013年度 (fiscal 2013)

A comparison of methods for sketch-based 3D shape retrieval

Sketch-based 3D shape retrieval has become an important research topic in content-based 3D object retrieval. To foster this research area, we organized two Shape Retrieval Contest (SHREC) tracks on this topic in 2012 and 2013, based on a small-scale and a large-scale benchmark, respectively. Six and five (nine in total) distinct sketch-based 3D shape retrieval methods competed against each other in these two contests, respectively. To measure and compare the performance of the top participating and other promising sketch-based 3D shape retrieval methods, and to solicit state-of-the-art approaches, we perform a more comprehensive comparison of the fifteen best retrieval methods (four top participating algorithms and eleven additional state-of-the-art methods) by completing the evaluation of each method on both benchmarks. The benchmarks, results, and evaluation tools for the two tracks are publicly available on our websites [1,2].

Bo Li, Yijuan Lu, Afzal Godil, Tobias Schreck, Benjamin Bustos, Alfredo Ferreira, Takahiko Furuya, Manuel J. Fonseca, Henry Johan, Takahiro Matsuda, Ryutarou Ohbuchi, Pedro B. Pascoal, Jose M. Saavedra, A comparison of methods for sketch-based 3D shape retrieval, Computer Vision and Image Understanding (CVIU), Volume 119, February 2014, Pages 57–80, ISSN 1077-3142, (PDF) http://dx.doi.org/10.1016/j.cviu.2013.11.008

Visual Saliency Weighting and Cross-Domain Manifold Ranking for Sketch-based Image Retrieval

Retrieving images (photos) from a line-drawing sketch is not an easy task. Sketches vary from person to person, with wobbling lines, disconnected lines, different levels of abstraction, different styles, etc. In addition, people often sketch only an object of interest, totally ignoring the background and accompanying (yet uninteresting) objects. These background and uninteresting objects in images get in the way of accurate comparison and retrieval. In this paper, we combine a saliency detection algorithm with a distance metric learning algorithm using Cross-Domain Manifold Ranking for more accurate and effective sketch-based image retrieval.

Takahiko Furuya, Ryutarou Ohbuchi, Visual Saliency Weighting and Cross-Domain Manifold Ranking for Sketch-based Image Retrieval, regular paper, Proc. Multi-Media Modeling (MMM) 2014, January, 2014, Dublin, Ireland. (PDF) Springer LNCS Volume 8325, pp. 37-49, 2014. (http://link.springer.com/chapter/10.1007%2F978-3-319-04114-8_4)

多視点画像特徴の多様体を用いたスケッチによる3Dモデルの検索

NICOGRAPH 2013 で古屋 貴彦,松田 隆広,栗田 侑希紀,大渕 竜太郎の4名が,優れた論文に対して贈られるNICOGRAPH 2013 優秀論文賞を受賞しました!

The following conference paper, co-authored by Takahiko Furuya, Takahiro Matsuda, Yukinori Kurita, and Ryutarou Ohbuchi, received the "Best paper award" at the NICOGRAPH 2013 conference held in Katsunuma, Japan, on Nov. 8~9, 2013.

古屋 貴彦, 松田 隆広, 栗田 侑希紀, 大渕 竜太郎, 多視点画像特徴の多様体を用いたスケッチによる3Dモデルの検索,NICOGRAPH 2013, 2013年11月8~9日. (NICOGRAPH 2013 優秀論文賞受賞) (PDF)

Ranking on Cross-Domain Manifold for Sketch-based 3D Model Retrieval

Sketch-based 3D model retrieval algorithms compare a query, a line drawing sketch, and 3D models for similarity by rendering the 3D models into line drawing-like images. Still, retrieval accuracies of previous algorithms remained low, as sets of features, one of sketches and the other of rendered images of 3D models, are quite different; they are said to lie in different domains. This paper proposes Cross-Domain Manifold Ranking (CDMR), an algorithm that effectively compares two sets of features that lie in different domains. Experimental evaluation by using sketch-based 3D model retrieval benchmarks showed that the CDMR is more accurate than state-of-the-art sketch-based 3D model retrieval algorithms.

Takahiko Furuya, Ryutarou Ohbuchi, Ranking on Cross-Domain Manifold for Sketch-based 3D Model Retrieval, regular paper, Proc. CyberWorlds 2013, pp. 274-281, Oct. 21-23, 2013, Tokyo, Japan. (PDF) (DOI:10.1109/CW.2013.60)

CW 2013 talk slide (long) (PDF)

CW 2013 talk slide (short) (PDF)

View-Clustering and Manifold Learning for Sketch-based 3D Model Retrieval

In this paper, we propose an algorithm that employs manifold-learning-based dimension reduction for sketch-based 3D model retrieval. The algorithm compares multi-view renderings of 3D models with the 2D sketch. To lower the cost of training a manifold learning algorithm, namely Locally Linear Embedding (LLE), we reduce the number of training samples by clustering, either in feature space or in view space. Experimental evaluation has shown that both view space clustering and feature space clustering lower the training cost by more than 10 times while significantly improving retrieval accuracy. A compact 50-dimensional feature after the dimension reduction is much faster to compare, and its retrieval accuracy is 40% better than that of the original 30k-dimensional feature.

Yukinori Kurita, Ryutarou Ohbuchi, View-Clustering and Manifold Learning for Sketch-based 3D Model Retrieval, short paper, Proc. CyberWorlds 2013, pp. 282-285, Oct. 21-23, 2013, Tokyo, Japan. (PDF) (DOI: 10.1109/CW.2013.70)
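The training-sample reduction described above can be sketched as follows. This is only an illustration under stated assumptions: the abstract does not say which clustering algorithm was used, so plain k-means stands in for it here, and the function name `reduce_training_samples` and its parameters are hypothetical. The idea is that the cluster centroids, rather than all samples, would be handed to the manifold learner.

```python
def reduce_training_samples(samples, k, iterations=20):
    """Return k cluster centroids to use in place of the full training set.

    Assumption: plain k-means is used for illustration; the paper's actual
    clustering method (in feature space or view space) may differ.
    """
    n = len(samples)
    # Deterministic initialization: pick k evenly spaced samples as seeds.
    centroids = [list(samples[(i * n) // k]) for i in range(k)]
    for _ in range(iterations):
        # Assignment step: attach every sample to its nearest centroid.
        clusters = [[] for _ in range(k)]
        for s in samples:
            nearest = min(
                range(k),
                key=lambda c: sum((a - b) ** 2 for a, b in zip(s, centroids[c])),
            )
            clusters[nearest].append(s)
        # Update step: move each centroid to the mean of its members.
        for c, members in enumerate(clusters):
            if members:  # keep the old centroid if a cluster went empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return centroids


if __name__ == "__main__":
    toy = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
    print(reduce_training_samples(toy, k=2))
```

With far fewer centroids than original samples, the cost of a manifold learner whose training time grows quickly with the number of samples drops accordingly.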

見かけ特徴の組み合わせと距離尺度の学習を用いた3次元形状類似検索 (Visual Feature Combination and Distance Metric Learning for 3D Shape Retrieval)

画像電子学会誌 (2012~2013年度) 最優秀論文賞を受賞!!

古屋 貴彦さんと大渕 竜太郎の執筆した以下の論文が,画像電子学会誌に2012~2013年度の2年間に掲載された全論文の中から2編以内の論文の著者らに贈られる「最優秀論文賞」を受賞しました.(2014年5月30日更新)

This paper describes a shape-based 3D model retrieval algorithm that employs multi-view rendering and densely sampled local visual features, the BF-DSIFT, to compare 3D models. To further boost retrieval accuracy, the algorithm uses manifold-based distance metric learning and fusion of multiple features. Retrieval accuracy is evaluated experimentally on multiple benchmark databases.

古屋貴彦,大渕竜太郎, 見かけ特徴の組み合わせと距離尺度の学習を用いた3次元形状類似検索, 画像電子学会誌, 第42巻,4号, pp.438-447, 2013年8月.(2012-2013年度 画像電子学会 最優秀論文賞を受賞)

Takahiko FURUYA,Ryutarou OHBUCHI, Visual Feature Combination and Distance Metric Learning for 3D Shape Retrieval, The journal of the Institute of Image Electronics Engineers of Japan (Journal of the IIEEJ), Vol.42, No.4, pp.438-447, August 2013. (PDF in Japanese)

(http://www.iieej.org/gakkaishi3/IIEEJ_Vol42-No4ori.pdf) (Recipient of the "Best paper" award from the Institute of Image Electronics Engineers of Japan (IIEEJ), which is awarded to at most two papers published during 2012 and 2013; the award is given once every two years.)

DENSELY SAMPLED LOCAL VISUAL FEATURES ON 3D MESH FOR RETRIEVAL

The Local Depth-SIFT (LD-SIFT) algorithm by Darom et al. has shown good retrieval accuracy for 3D models defined as densely sampled manifold meshes. However, it has two shortcomings. The LD-SIFT requires the input mesh to be densely and evenly sampled. Furthermore, the LD-SIFT can’t handle 3D models defined as a set of multiple connected components or a polygon soup. This paper proposes two extensions to the LD-SIFT to alleviate these weaknesses. The first extension shuns interest point detection and employs dense sampling on the mesh. The second extension employs remeshing by dense sample points, followed by interest point detection a la LD-SIFT. Experiments using three different benchmark databases showed that the proposed algorithms significantly outperform the LD-SIFT in terms of retrieval accuracy.

Yuya Ohishi, Ryutarou Ohbuchi, DENSELY SAMPLED LOCAL VISUAL FEATURES ON 3D MESH FOR RETRIEVAL, short paper, Proc. WIAMIS 2013, pp.1-4, July 3-5, 2013, Paris, France. (PDF) (DOI: 10.1109/WIAMIS.2013.6616166)

クロスドメイン多様体ランキングを用いたスケッチによる3Dモデルの検索

Visual ComputingグラフィクスとCAD合同シンポジウムにおける優れた発表に対し,古屋 貴彦さんが情報処理学会・グラフィクスとCAD研究会優秀研究発表賞を受賞しました.

Takahiko Furuya, lead author and presenter of the following paper, received the "IPSJ SIG on Graphics and CAD outstanding research presentation" award at the Joint Visual Computing/Graphics & CAD Symposium 2013 held in Aomori, Japan, on June 22~23, 2013.

古屋 貴彦, 大渕 竜太郎, クロスドメイン多様体ランキングを用いたスケッチによる3Dモデルの検索,Proc. Visual ComputingグラフィクスとCAD合同シンポジウム 2013, 2013年6月22日~23日. (情報処理学会・グラフィクスとCAD研究会優秀研究発表賞受賞)

左下の写真は,2014年6月28日に行われた授賞式の模様です.

The picture at the lower left shows the award ceremony held on June 28, 2014.

2012年度 (fiscal 2012)

ICPR 2012 Tutorial AM-04 (ICPR 2012 でチュートリアル講義を行いました)

3D Shape Analysis and Retrieval - Recent Advances and Trends (3次元形状解析と検索 ‐ 最近の動向 ‐)

Tutorial slides (at Google Sites)

Lecturers:

Hamid Laga* and Ryutarou Ohbuchi** (講師:ハミド ラーガさんと大渕)

*School of Mathematics and Statistics, University of South Australia, Australia

**Computer Science and Engineering Department, University of Yamanashi, Japan

Local Geometry Adaptive Manifold Re-Ranking for Shape-Based 3D Object Retrieval

This paper proposes an improvement to the Manifold Ranking algorithm used for ranking search results in shape-based 3D model retrieval. Given a set of high-dimensional feature vectors, the Manifold Ranking algorithm by Zhou et al. estimates a lower-dimensional manifold on which the features lie. It then computes diffusion-based distances from a feature vector (or feature vectors) to the other feature vectors on the manifold. When applied to content-based retrieval, its overall retrieval accuracy is significantly better than that of a “simple” fixed distance metric. However, in a small neighborhood of the query, retrieval ranks obtained by a “simple” distance metric (e.g., L1-norm) are better than those obtained by Manifold Ranking. The proposed re-ranking algorithm combines the ranking results of both the simple distance metric and Manifold Ranking in an automatic query expansion framework for better ranking results. Experimental evaluation has shown that the proposed method is effective in improving retrieval accuracy.

Ryutarou Ohbuchi, Yukinori Kurita, Local Geometry Adaptive Manifold Re-Ranking for Shape-Based 3D Object Retrieval, in Proc. ACM Multimedia 2012 (ACM MM 2012), short paper, pp. 901-904, Oct. 29-Nov. 2, 2012, Nara, Japan. (PDF) (DOI: 10.1145/2393347.2396342)
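For readers unfamiliar with Manifold Ranking, the diffusion step mentioned above can be sketched roughly as below. This is a minimal illustration of the basic iteration by Zhou et al., not the re-ranking method of this paper. The fully connected Gaussian affinity graph, the values of `sigma` and `alpha`, and the function name `manifold_ranking` are assumptions made for the example.

```python
import math


def manifold_ranking(features, query_index, sigma=1.0, alpha=0.9, iterations=200):
    """Diffuse relevance from the query over an affinity graph of features.

    Assumptions: fully connected Gaussian affinities and illustrative
    sigma/alpha values; at least two feature vectors are given.
    """
    n = len(features)
    # Affinity matrix W with a zero diagonal.
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j:
                d2 = sum((a - b) ** 2 for a, b in zip(features[i], features[j]))
                W[i][j] = math.exp(-d2 / (2.0 * sigma ** 2))
    # Symmetric normalization S = D^(-1/2) W D^(-1/2).
    deg = [sum(row) for row in W]
    S = [[W[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    # y marks the query; f is iterated as f <- alpha * S f + (1 - alpha) * y.
    y = [1.0 if i == query_index else 0.0 for i in range(n)]
    f = y[:]
    for _ in range(iterations):
        f = [
            alpha * sum(S[i][j] * f[j] for j in range(n)) + (1.0 - alpha) * y[i]
            for i in range(n)
        ]
    return f  # a higher score means closer to the query along the manifold


if __name__ == "__main__":
    feats = [(0.0, 0.0), (0.1, 0.0), (0.2, 0.0), (5.0, 5.0), (5.1, 5.0)]
    print(manifold_ranking(feats, query_index=0))
```

Sorting database items by descending score gives the diffusion-based ranking; a fixed-metric ranking would instead sort by the distance to the query alone.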

A comparison of methods for non-rigid 3D shape retrieval

Non-rigid 3D shape retrieval has become an active and important research topic in content-based 3D object retrieval. The aim of this paper is to measure and compare the performance of state-of-the-art methods for non-rigid 3D shape retrieval. The paper develops a new benchmark consisting of 600 non-rigid 3D watertight meshes, which are equally classified into 30 categories, to carry out experiments for 11 different algorithms, whose retrieval accuracies are evaluated using six commonly utilized measures. Models and evaluation tools of the new benchmark are publicly available on our web site [1].

Zhouhui Lian, Afzal Godil, Benjamin Bustos, Mohamed Daoudi, Jeroen Hermans, Shun Kawamura, Yukinori Kurita, Guillaume Lavoué, Hien Van Nguyen, Ryutarou Ohbuchi, Yuki Ohkita, Yuya Ohishi, Fatih Porikli, Martin Reuter, Ivan Sipiran, Dirk Smeets, Paul Suetens, Hedi Tabia, Dirk Vandermeulen, A comparison of methods for non-rigid 3D shape retrieval, Pattern Recognition, Volume 46, Issue 1, Elsevier, January, 2013, ISSN 0031-3203, DOI:10.1016/j.patcog.2012.07.014., URL: www.sciencedirect.com/science/article/pii/S0031320312003305 (PDF)

Non-rigid 3D Model Retrieval Using Set of Local Statistical Features

In this paper, we present a 3D model retrieval algorithm that employs a bag of 3D local geometrical features. The local feature is a statistical feature computed from an oriented point set. It is inherently invariant to translation, (uniform) scaling, and rotation. For non-rigid model retrieval, the algorithm achieves good retrieval accuracy.

This paper is a condensed version of the bachelor's thesis of Yuki Ohkita, who graduated in March 2009.

Yuki Ohkita, Yuya Ohishi, Takahiko Furuya, Ryutarou Ohbuchi, Non-rigid 3D Model Retrieval Using Set of Local Statistical Features, Proc. IEEE ICME 2012 Workshop on Hot Topics in 3D Multimedia (Hot3D), July 9, 2012, Melbourne, Australia, DOI: 10.1109/ICMEW.2012.109 (PDF)

Supervised, geometry-aware segmentation of 3D mesh models

This work presents a supervised segmentation algorithm for 3D manifold mesh models. The user teaches the system a segment by painting a small subset of the polygons of a mesh. After a few segments are specified, the system runs a semi-supervised learning algorithm guided by local surface geometrical features to propagate labels over the 3D model manifold. For the propagation, we used Manifold Ranking by Zhou et al., an algorithm that mimics a diffusion-like process on the manifold surface.

This paper is a summary of the Master's thesis of Keisuke Banba, who graduated in March 2011.

Keisuke Banba, Ryutarou Ohbuchi, Supervised, geometry-aware segmentation of 3D mesh models, Proc. IEEE ICME 2012 Workshop on Human-Focused Communications in the 3D Continuum, July 9, 2012, Melbourne, Australia. DOI: 10.1109/ICMEW.2012.16 (PDF)

Local Geometrical Feature with Positional Context for Shape-based 3D Model Retrieval

A set of local distance histograms computed on manifold 3D mesh surfaces can be quite effective in comparing deformable or articulated models. However, these features are often not discriminative enough. For example, they give the same set of features even if the 3D model goes through volume-changing deformations, as long as the surface distances are preserved. In this work, we tried to combine a local geometrical feature computed in local 3D Euclidean space with local distance histograms. We also attempted to make the features computable on multiply-connected or non-manifold 3D models by remeshing the surfaces into a singly connected mesh.

This work is a summary of one half of the master's thesis of Shun Kawamura, who graduated in March 2011. He also worked on Shape-Based Autotagging of 3D Models (PDF).

Shun Kawamura, Kazuya Usui, Takahiko Furuya and Ryutarou Ohbuchi, Local Geometrical Feature with Positional Context for Shape-based 3D Model Retrieval, poster paper, Proc. Eurographics 2012 Workshop on 3D Object Retrieval, pp. 55-58, DOI: 10.2312/3DOR/3DOR12/055-058, 2012. (PDF)

2011年度 (fiscal 2011)

SHREC 2012 Shape Retrieval Contest (February, 2012)

Shape Retrieval Contest based on Generic 3D Dataset

We placed 1st in this track. Mr. Tomohiro Yanagimachi led the effort. Details of the performance evaluation results, with recall-precision plots and a table of performance indices, are found at a web site at NIST. Our best performing variation, named Yanagimachi(DG1SIFT) in the evaluation results, used the BF-DSIFT combined with a few more features and with distance metric learning based on a slightly modified Manifold Learning. Even the least powerful of our three entries, the BF-DSIFT alone combined with the slightly modified Manifold Learning, outperformed the other teams' entries.

2nd place went to Mr. A. Tatsuma and Prof. M. Aono, who are friends of mine. Prof. Aono and I worked together at IBM Research Lab. in Tokyo.

我々のチームが1位!柳町 知宏さんが中心になって頑張ってくれました.詳細はこのページのグラフや表を見てください.一般的3次元モデルを対象とした本部門で,古屋 貴彦さん(2010年3月卒業)が開発したBF-DSIFT手法に改良型Manifold Learningを加えただけのYanagimachi(DSIFT)で1位.さらに,これにBF-GSIFT,1SIFTなどの特徴を組み合わせたYanagimachi(DG1SIFT)ではさらに他を引き離しました.

2位も日本チーム!2位になった豊橋技術科学大学のTatsuma(立間さん)さんと青野 雅樹教授は大渕のお友達.(青野さん大渕はIBM東京基礎研究所で同じグループでした!)

B. Li, A. Godil, M. Aono, X. Bai, T. Furuya, L. Li, R. López-Sastre, H. Johan, R. Ohbuchi, C. Redondo-Cabrera, A. Tatsuma, T. Yanagimachi, S. Zhang, SHREC’12 Track: Generic 3D Shape Retrieval, Proc. Eurographics Workshop on 3D Object Retrieval, May 2012. (DOI: 10.2312/3DOR/3DOR12/119-126) (PDF)

Sketch-Based 3D Shape Retrieval

We entered the sketch-based 3D shape retrieval track with a quick hack, a slightly modified version of the algorithm described in this paper. It is not designed for sketch based retrieval, so the result is expected.

Obviously, we have a lot to cover in this track.

(If we are to make excuses for our poor showing, our algorithm is designed to accept polygon soup, point set, and other 3D models. Top contenders, on the other hand, are tuned for oriented, manifold meshes, which allows for additional feature extraction methods.)

B. Li, A. Godil, T. Schreck, M. Alexa, T. Boubekeur, B. Bustos, J. Chen, M. Eitz, T. Furuya, K. Hildebrand, S. Huang, H. Johan, A. Kuijper, R. Ohbuchi, R. Richter, J. M. Saavedra, M. Sherer, T. Yanagimachi, G. J. Yoon, S. M. Yoon, SHREC’12 Track: Sketch-Based 3D Shape Retrieval, Proc. Eurographics Workshop on 3D Object Retrieval, May 2012. (DOI: 10.2312/3DOR/3DOR12/109-118) (PDF)

Clustering to reduce training samples for manifold learning algorithms.

Megumi Endoh, Tomohiro Yanagimachi, Ryutarou Ohbuchi, Efficient manifold learning for 3D model retrieval by using clustering-based training sample reduction, poster paper, Proc. IEEE Int'l Conf. on Acoustics, Speech, and Signal Processing 2012 (IEEE ICASSP 2012), Kyoto, Japan, March 2012. (DOI: 10.1109/ICASSP.2012.6288385) (PDF)

2010年度 (fiscal 2010)

Distance metric learning via manifold learning for 3D model retrieval, with a small trick.

The manifold learning algorithm by Zhou et al. may not work as intended for some high-dimensional features, e.g., those produced by a large-vocabulary bag-of-features approach. We report an empirical technique to resolve that issue in a 3D model retrieval setting. We used the method for the Generic 3D Warehouse, Non-rigid shapes, and Range-scans tracks of the SHREC 2010 3D model retrieval contest.

Ryutarou Ohbuchi, Takahiko Furuya, Distance Metric Learning and Feature Combination for Shape-Based 3D Model Retrieval, poster paper, Proceedings of the ACM workshop on 3D object retrieval 2010, Firenze, Italy, (2010). (PDF)

Dimension reduction of bag-of-visual features for 3D model retrieval improves retrieval performance, while reducing the size of the feature to 1/10.

Our bag-of-visual features approach for 3D model retrieval produces features having high dimensionality, e.g., 30k dimensions. Reduction in dimensionality is desired for faster comparison and compact storage. We applied unsupervised and supervised dimension reduction algorithms, and succeeded in producing a compact yet more salient feature vector. The compressed feature is about 1/10 the size of the original, while its retrieval performance surpassed that of the original.

For the non-rigid models of the McGill Shape Benchmark (MSB), supervised learning yielded a near-perfect score of R-precision=99.8%. For the SHREC 2007 CAD models, BF-DSIFT achieved R-precision=82% after supervised learning. With the difficult and diverse SHREC 2006 benchmark, we achieved R-precision=65.6% after semi-supervised learning.
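As a reminder of what the R-precision figures above measure, here is a minimal sketch. It assumes the usual definition (precision within the top R results, where R is the number of database models relevant to the query) and binary class labels; the function name `r_precision` and the toy labels are hypothetical, and the benchmark's own evaluation tools may differ in details.

```python
def r_precision(ranked_labels, query_label):
    """Precision within the top R results, R = number of relevant models.

    ranked_labels: class labels of the retrieved models, best match first,
    with the query itself excluded (an assumption of this sketch).
    """
    relevant_total = sum(1 for label in ranked_labels if label == query_label)
    if relevant_total == 0:
        return 0.0
    hits = sum(1 for label in ranked_labels[:relevant_total] if label == query_label)
    return hits / relevant_total


if __name__ == "__main__":
    # Three "chair" models exist; two of them appear in the top three results.
    print(r_precision(["chair", "chair", "table", "chair", "table"], "chair"))
```

A benchmark score is then the mean of this value over all queries.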

我々が提案した3次元形状モデル検索の為の見かけに基づく特徴は,Bag-of-Featuresのヒストグラムとして特徴を求めます.その特徴は次元が高く(3万次元程度),またゼロ要素が多いものでした.特徴をコンパクトにしてその蓄積コストと検索の計算量を下げること,さらに,複数の意味カテゴリ(100個程度)を考慮した検索を実現すること,の2つを目的として,教師なし,および教師付き,の次元削減を試みました.その結果,検索性能が向上し,かつ,特徴量の次元を大幅に下げることに成功しました

Ryutarou Ohbuchi, Masaki Tezuka, Takahiko Furuya, Takashi Oyobe, Squeezing Bag-of-Features for Scalable and Semantic 3D Model Retrieval, Proc. 8th International Workshop on Content-Based Multimedia Indexing (CBMI) 2010, 23-25 June 2010, Grenoble, France. (PDF)

Invited talk on 3D shape retrieval technology at the 3D Image Conference (3次元画像コンファレンス).

大渕 竜太郎,「形状の類似性による3次元モデルの検索」,3次元画像コンファレンス招待講演予稿.(2ページ)[Draft PDF]

A tutorial article on 3D shape retrieval technology.

大渕 竜太郎,「3次元形状の検索」,マルチメディア検索の最先端 第7回,映像情報メディア学会誌,Vol. 64, No. 7, (2010年7月号) (6ページ)[Draft PDF]

We won some of the Shape REtrieval Contest (SHREC 2010) tracks (3次元モデル検索の国際コンテストにおいて,複数部門で1位~2位を獲得!)

We have entered this year's SHape REtrieval Contest (SHREC 2010), which compares retrieval performance and other features of 3D model retrieval algorithms. This year, the contest included 8 tracks, such as Generic 3D Warehouse, Non-rigid shapes, Range-scans, Large Scale Database, Protein Models, Part Correspondence, and Architectural models. We entered the first four of the tracks listed above: Generic 3D Warehouse, Non-rigid shapes, Range-scans, and Large Scale Database.

We either tied for the 1st place or came in 2nd place in all the four tracks we entered.

3次元モデル検索の国際コンテストSHREC(SHape REtrieval Contest)が今年も開催され,我々もGeneric 3D Warehouse, Non-rigid shapes, Range-scans, および Large Scale Databaseの4つの部門に参加しました.その4部門の全てで,1位または2位の好成績を収めました!

Range-scans track and its results (results in Excel file) (レンジスキャン3Dモデル検索部門)

We came in the first place, with a large margin, among two entrants. We used the method we published at ICCV S3D (PDF), a derivative of the BF-DSIFT, for the track, with a small modification. On top of the method described in the ICCV S3D paper (PDF), we applied morphological filtering (e.g., dilation or closing) on rendered range data in an attempt to reduce effects of cracks and gaps in the range mesh. The morphological filter helped a bit in improving retrieval performance.
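The morphological filtering mentioned above can be illustrated with a small sketch of a closing (dilation followed by erosion) on a range image. The representation is an assumption made for the example: a 2D list of depth values with 0 as background, a 3x3 window, and windows clipped at the image border. The function names are hypothetical, and the filter actually used for the contest entry is not specified beyond "dilation or closing".

```python
def _filter3x3(img, reducer):
    """Apply reducer (max or min) over each pixel's 3x3 neighborhood."""
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            window = [
                img[yy][xx]
                for yy in range(max(0, y - 1), min(h, y + 2))
                for xx in range(max(0, x - 1), min(w, x + 2))
            ]
            out[y][x] = reducer(window)
    return out


def dilate(img):
    return _filter3x3(img, max)


def erode(img):
    return _filter3x3(img, min)


def close_gaps(img):
    """Morphological closing: fills holes and cracks narrower than the window."""
    return erode(dilate(img))


if __name__ == "__main__":
    # A flat surface at depth 9 with a one-pixel-wide crack along the middle row.
    cracked = [[9] * 5, [9] * 5, [0] * 5, [9] * 5, [9] * 5]
    for row in close_gaps(cracked):
        print(row)
```

Closing removes thin gaps while leaving larger background regions mostly intact, which is why it suits cracks in a rendered range mesh.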

レンジスキャンによる検索の部門には2チームが参加し,我々が大差で1位です.(結果)

Non-rigid shapes track and its results (非剛体3Dモデル検索部門)

This track compares retrieval performance of deformable / articulated 3D shapes. So a global shape descriptor geared for a rigid shape is not useful.

非剛体形状を検索する部門では,1位タイでした.(結果)全く異なる手法と1位を分けたのが面白いところです.

形状表現に対する汎用性という点では我々の手法が優位です.我々の手法は多様な形状表現(ポリゴンスープ,多様体メッシュ,点群,...)などに対応出来ます.しかし,同着1位のSmeetさんらの手法は,ほぼ,多様体メッシュに限定されます.

検索処理の手間・処理時間の点でもわれわれの手法は高速です.検索要求の提示から検索結果が戻るまで2~3秒です.

We tied for the first place among three entrants. The contest results were quite interesting, in that our completely (2D) appearance-based method performed equally well with a method by Smeets et al. that extracts features on a 2D manifold mesh embedded in 3D space.

Note that we used the same BF-DSIFT method combined with Manifold Ranking for the articulated shapes of this non-rigid shapes track and for the rigid models of the Generic 3D Warehouse track.

Our method is also computationally efficient. A query is processed in 2~3 seconds.

Z. Lian, A. Godil, T. Fabry, T. Furuya, J. Hermans, R. Ohbuchi, C. Shu, D. Smeets, P. Suetens, D. Vandermeulen, S. Wuhrer. SHREC’10 Track: Non-rigid 3D Shape Retrieval. accepted, Eurographics Workshop on 3D Object Retrieval, 2010. [Draft pdf] [Results]

Generic 3D warehouse track and its results (results in Excel file) (多様な一般の3Dモデル検索部門)

This track compares retrieval performance of rigid 3D models crawled from Google 3D Warehouse.

As noted above, we used the same BF-DSIFT method combined with Manifold Ranking for the articulated shapes of the non-rigid shapes track above as well as for the rigid models of this Generic 3D Warehouse track.

The BF-DSIFT accepts a diverse set of shape representations, such as polygon soup, manifold mesh, point set, etc., as long as they can be rendered as range images. Yet the method is quite powerful in terms of retrieval performance. It is also efficient, for it takes only a few seconds per query for a 1,000-model database. (Other methods having comparable retrieval performance take much longer to process a query.)

Large Scale Database (大規模3Dモデルデータベース検索部門)

This track compares retrieval performance of rigid 3D models using a larger, 10,000-model database. About 90% of the database, however, consists of junk 3D models, i.e., "exploded" fragments of triangles filling an entire bounding box. Actual "meaningful" 3D models are only about 10% of the 10,000 models.

We used, again, the same BF-DSIFT algorithm for this track, but without the Manifold Ranking this time. Manifold ranking is based on the global distribution of features that forms a "feature manifold", and the garbage 3D models affected that manifold. In addition, manifold ranking becomes computationally expensive as the number of features to be considered increases.

2009年度 (fiscal 2009)

Shape-based Autotagging of 3D Models for Retrieval

(形状による自動タグ付けを用いたキーワードによる3Dモデル検索)

Specification of a query is one of the most fundamental issues in retrieving multimedia objects such as images and 3D geometric models. While query by 3D model example (or 2D sketch example) of a desired shape is quite effective, text-based search and retrieval of 3D models, or the Query By Text (QBT) approach, has its own set of advantages. For example, specifying semantics or intention may be easier by text than by shape example. An issue in the QBT approach is the lack of labeled 3D models. This paper discusses a method to add text tags to 3D models without tags. Given a set of 3D models with tags, that is, the labeled training set, the method propagates the likelihoods, or Tag Relevance Ranks, of the tags to the other models by means of multiple overlapped manifold ranking [Zhou03].

Ryutarou Ohbuchi and Shun Kawamura, Shape-Based Autotagging of 3D Models for Retrieval, 4th International Conference on Semantic and Digital Media Technologies (SAMT 2009), Graz, Austria, Dec. 2-4, 2009. Lecture Notes in Computer Science, Volume 5887/2009, Springer (PDF)

Scale-Weighted Dense Bag of Visual Features for 3D Model Retrieval from a Partial View 3D Model

(スケール重み付けを用いた密サンプル視覚特徴集合を用いた部分視点深さデータからの3次元モデル検索)

This paper describes a method for searching full 3D models from a 3D mesh range-scanned from a single viewpoint. The method is based on the 3D model retrieval algorithm using local visual features that we published previously (Ohbuchi_SMI08). However, significant changes are made to deal with issues associated with range scanning, such as cracks and jagged edges of the query 3D mesh. Those changes include dense and random placement of Lowe's Scale Invariant Feature Transform (SIFT) sample points, as well as an importance sampling of lower-frequency, i.e., larger-scale, images of the Gaussian image pyramid used in the SIFT. Experimental evaluation showed that the method significantly outperformed the methods in the SHREC 2009 Partial View Retrieval track (organized by Afzal Godil, et al.); the proposed method scored Mean First Tier = 37%, whereas the two entries to the SHREC scored 15 percentage points lower, at Mean First Tier = 22%.

Ryutarou Ohbuchi and Takahiko Furuya, Scale-Weighted Dense Bag of Visual Features for 3D Model Retrieval from a Partial View 3D Model, Proc. IEEE ICCV 2009 workshop on Search in 3D and Video (S3DV) 2009, Sept. 27, Kyoto, Japan. (PDF)

Dense Sampling and Fast Encoding for 3D Model Retrieval Using Bag-of-Visual Features

(密なサンプリングと高速な符号化を適用したバグオブフィーチャーズ法による3次元モデルの検索)

We have improved the execution speed and retrieval performance of the 3D model retrieval algorithm based on local visual features that we published previously (Ohbuchi_SMI08). The method employs dense random sampling of SIFT features in the multi-view images to capture more features than the original, salient-point based method. To compensate for the increased cost of computation due to the much larger number of local visual features, we adopted both GPU-based feature extraction [Ohbuchi_S-3D_08] and a fast randomized decision tree algorithm for codebook learning and visual word encoding.

Takahiko Furuya, Ryutarou Ohbuchi, Dense Sampling and Fast Encoding for 3D Model Retrieval Using Bag-of-Visual Features, Proc. ACM International Conference on Image and Video Retrieval 2009 (CIVR 2009), July 8-10, 2009, Santorini, Greece, (2009) (PDF) (DOI: 10.1145/1646396.1646430) (Recognized as one of "Most cited papers before the era of ICMR" in ACM SIGMM Records, Volume 6, Issue 1, March 2014 (ISSN 1947-4598).)
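The "visual word encoding" step of a bag-of-visual-features pipeline can be sketched as below. Note the substitution: the paper adopted a fast randomized decision tree for encoding, whereas this illustration uses a brute-force nearest-codeword search, which is simpler but slower. The function name `encode_bag_of_features`, the toy 2D features, and the two-word codebook are hypothetical.

```python
def encode_bag_of_features(local_features, codebook):
    """Quantize local features to visual words and count word occurrences.

    Assumption: brute-force nearest-neighbor search replaces the randomized
    decision tree used in the paper; the resulting histogram plays the same
    role as the per-model feature vector.
    """
    histogram = [0] * len(codebook)
    for feature in local_features:
        nearest_word = min(
            range(len(codebook)),
            key=lambda k: sum((a - b) ** 2 for a, b in zip(feature, codebook[k])),
        )
        histogram[nearest_word] += 1
    return histogram


if __name__ == "__main__":
    codebook = [(0.0, 0.0), (10.0, 10.0)]
    features = [(1.0, 0.0), (9.0, 9.0), (0.0, 1.0), (11.0, 10.0)]
    print(encode_bag_of_features(features, codebook))
```

With thousands of densely sampled features per model and a vocabulary of tens of thousands of words, the brute-force search above becomes the bottleneck, which is the motivation for a faster encoder.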

2008年度 (fiscal 2008)

Accelerating Bag-of-Features SIFT Algorithm for 3D Model Retrieval

(SIFT特徴とバグオブフィーチャーズを用いた3Dモデル検索の高速化)

We accelerated some of the steps for the Bag-of-Features SIFT algorithm for 3D model retrieval (Ohbuchi_SMI08) we have published at the SMI 2008. We adopted Wu's algorithm for GPU-based SIFT feature extraction and table lookup for distance computation for a significant speedup without degradation in retrieval performance.

Ryutarou Ohbuchi, Takahiko Furuya, Accelerating Bag-of-Features SIFT Algorithm for 3D Model Retrieval, Proceedings of the SAMT 2008 Workshop on Semantic 3D Media, Koblenz, Germany, Dec. 3, 2008, pp. 23-30, (2008) (PDF)

Ranking on Semantic Manifold for Semantic 3D Model Retrieval

(意味多様体上でのランキングを用いた意味的な3次元モデル検索)

Shape-based comparisons of 3D models are affected by the shapes as well as the semantics of the 3D models. The semantics may be classified into long-term, well-established, shared semantics and per-query-session semantics (or intention). Previously, most so-called "semantic" retrieval algorithms took only one of these types of semantics into consideration. Our novel approach combines a set of long-term, established semantic classes with per-query-session semantics for 3D model retrieval. Our method first learns, off-line, a set of multiple semantic classes by using our semi-supervised dimension reduction approach [Ohbuchi, MIR07] to produce a "semantic manifold" from the input, ambient features. The method then applies manifold ranking-based relevance feedback (RF) on the semantic manifold. Our experimental evaluation showed that the RF using manifold ranking performed on the semantic manifold far outperforms the same RF applied to the original ambient feature space.

Ryutarou Ohbuchi, Toshiya Shimizu, Ranking on Semantic Manifold for Semantic 3D Model Retrieval, an oral paper, ACM MIR 2008, Vancouver, Canada, Oct. 2008. (PDF) (Acceptance ratio, including poster papers, is 21%)

We won the SHape REtrieval Contest (SHREC) 2008 CAD and Generic Models tracks

(SHREC2008のCADモデルおよび一般・多様モデル部門で優勝!)

We won the two tracks in the SHape REtrieval Contest (SHREC) 2008, the CAD Model Track 2008 and Generic Models Track. We entered the two tracks using our three algorithms.

CAD Model Track (https://engineering.purdue.edu/PRECISE/shrec08)

(CADモデル部門)

We won the CAD Model Track for two consecutive years.

U_Yama_A won with a very large margin as the graph shows. For the details, see the SHREC 2008 CAD track page.

U_Yama_A Akihiro Yamamoto, Masaki Tezuka, Toshiya Shimizu, Ryutarou Ohbuchi, SHREC'08 Entry: Semi-Supervised Learning for Semantic 3D Model Retrieval, pp 241-243, Proc. IEEE Shape Modeling International (SMI) 2008, June 4-6, Stony Brook, NY, USA (2008) (PDF).

U_Yama_B* Kunio Osada, Takahiko Furuya, Ryutarou Ohbuchi, SHREC'08 Entry: Local 2D Visual Features for CAD Model Retrieval, pp 237-238, Proc. IEEE Shape Modeling International (SMI) 2008, June 4-6, Stony Brook, NY, USA (2008) (PDF).

* The two methods named "U_Yama_B" that appear in the Generic Models Track and the CAD Model Track are different. The U_Yama_A methods in the two tracks, on the other hand, are the same.

Generic Models Track (多様・一般モデル検索部門)

U_Yama_A (the same method as the U_Yama_A for the CAD Model Track) won the query set 1.

U_Yama_B (different method from the U_Yama_B for the CAD model track) won the query set 2.

U_Yama_A Akihiro Yamamoto, Masaki Tezuka, Toshiya Shimizu, Ryutarou Ohbuchi, SHREC'08 Entry: Semi-Supervised Learning for Semantic 3D Model Retrieval, pp 241-243, Proc. IEEE Shape Modeling International (SMI) 2008, June 4-6, Stony Brook, NY, USA (2008) (PDF).

U_Yama_B* Kunio Osada, Takahiko Furuya, Ryutarou Ohbuchi, SHREC'08 Entry: Local Volumetric Features for 3D Model Retrieval, pp 245-246, Proc. IEEE Shape Modeling International (SMI) 2008, June 4-6, Stony Brook, NY, USA (2008) (PDF).

* The two methods named "U_Yama_B" that appear in the Generic Models Track and the CAD Model Track are different. The U_Yama_A methods in the two tracks, on the other hand, are the same.

Salient local visual features for shape-based 3D model retrieval

(顕著点における局所特徴を用いた3Dモデル形状類似検索)

We have developed a method to compare 3D models by their shape using a set of local visual features. Our paper has been accepted as a regular paper for the SMI 2008. By using local visual features, the method performs very well in comparing articulated or deformable shapes, such as human beings, snakes, insects, etc. Evaluated by using the McGill 3D Shape Benchmark for the articulated figures, it far outperformed all the global shape features we tested.

For the rigid shapes, evaluated by using the Princeton Shape Benchmark, the method performs about as well as some of the most powerful methods employing global shape features. This paper has received quite a few citations (i.e., >170, as of April, 2014) according to Google Scholar.

Ryutarou Ohbuchi, Kunio Osada, Takahiko Furuya, Tomohisa Banno, Salient local visual features for shape-based 3D model retrieval, pp 93-102, Proc. IEEE Shape Modeling International (SMI) 2008, June 4-6, Stony Brook, NY, USA. (PDF)

2007年度 (fiscal 2007)

Off-line supervised learning of semantic categories for semantic 3D model retrieval

(意味カテゴリのオフライン教師付き学習を用いた3次元モデルの意味的な検索)

Similarities among 3D model "shapes" are often influenced by their semantics as much as by their shape. Semantic influence may be captured by on-line learning via relevance feedback, or by off-line learning of semantic categories in a training database.

In this study, we have used semi-supervised off-line learning of semantic categories to boost 3D model retrieval performance. The method and experimental evaluation results are described in the paper below. It is a draft of a paper accepted as an oral paper for the 9th ACM SIGMM International Workshop on Multimedia Information Retrieval (ACM MIR 2007) to be held in Augsburg, Germany. This research is a part of Akihiro Yamamoto's Master's thesis work.

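By learning semantic categories with semi-supervised learning, we succeeded in improving retrieval performance on a 3D model database. The method is described in the paper (PDF) accepted for oral presentation at the 9th ACM SIGMM International Workshop on Multimedia Information Retrieval (ACM MIR 2007).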

Ryutarou Ohbuchi, Akihiro Yamamoto, Jun Kobayashi, Learning semantic categories for 3D Model Retrieval, accepted, Proc. ACM MIR 2007, Augsburg, Germany, pp. 31-40, Oct. 2007. (PDF).

Performance of this 3D model retrieval method was measured using SHREC 2006, and is listed here.

The new method outperforms our previous method by about 15% in terms of Mean First Tier (Highly relevant) measure (57.9% for the new method), or the Mean Average Precision measure (0.618 for the new method).

Our new 3D model retrieval method (Method_A in the list), which employs off-line supervised learning of semantic categories, is evaluated using the SHREC 2006 benchmark. It outperforms our previous method (based on unsupervised learning) as well as all the methods that entered SHREC 2006 by a significant margin.
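
To make the two measures quoted above concrete, here is a minimal sketch of how First Tier and Average Precision are commonly computed for a single query; averaging each over all queries gives the "Mean" figures. The function names and the toy ranking are illustrative assumptions, and the sketch does not reproduce the SHREC 2006 distinction between highly and marginally relevant classes.

```python
def first_tier(ranked_relevant, num_relevant):
    """Fraction of the relevant items found within the top `num_relevant` results."""
    if num_relevant == 0:
        return 0.0
    top = ranked_relevant[:num_relevant]
    return sum(top) / num_relevant


def average_precision(ranked_relevant, num_relevant):
    """Mean of the precision values taken at the rank of each relevant item."""
    if num_relevant == 0:
        return 0.0
    hits = 0
    precision_sum = 0.0
    for rank, is_relevant in enumerate(ranked_relevant, start=1):
        if is_relevant:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / num_relevant


def mean_over_queries(values):
    """Average a per-query measure over all queries."""
    return sum(values) / len(values)


# Hypothetical ranked result list for one query (query itself excluded):
# True marks a model in the same category as the query.
ranking = [True, False, True, True, False, False]
ft = first_tier(ranking, num_relevant=3)
ap = average_precision(ranking, num_relevant=3)
print(f"First Tier = {ft:.3f}, Average Precision = {ap:.3f}")
```

In this toy case two of the three relevant models appear in the top three results, so First Tier is 2/3, while Average Precision also rewards the third relevant model for appearing at rank 4 rather than later.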

We are the No.1 in the SHREC 2007 CAD model track

3次元CADモデル検索コンテスト(SHREC2007 CADトラック)で我々が優勝!

We won the SHREC 2007 (Shape Retrieval Contest) CAD model track. Our method won the competition by a significant margin, as the result shows.

The method we have employed is described in this short paper for the SHREC 2007 report. The contest's results are presented at a special session at the Shape Modeling International 2007 (SMI 2007) in Lyon, France.

We basically used the feature dimension reduction based on unsupervised learning described in the papers (ACM MIR 2006, R. Ohbuchi, J. Kobayashi, PDF) and (AMR 2007, R. Ohbuchi, J. Kobayashi, A. Yamamoto, PDF).

We also used the multiresolution shape comparison approach we have previously proposed (R. Ohbuchi, T. Takei, PG 2003, PDF).

It should be noted that we did not use "CAD models" for the learning; we used 5,000 "generic" 3D models to train the Locally Linear Embedding algorithm by Roweis et al.

We took first place in the CAD model track of the SHREC 2007 3D model retrieval contest! See here for the rankings.

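The method is described in this paper. Using unsupervised learning, it learns, from the features of a large number of 3D models, the subspace spanned by those features, and then uses geodesic distance in that subspace. The basic approach is described in this MIR 2006 paper.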

For details on the organization of SHREC 2007 and its results, see

http://www.aimatshape.net/event/SHREC/UU-CS-2007-015.pdf
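
The note above describes the method as learning a subspace from the features of many 3D models and measuring geodesic distance within it. As a rough illustration of what "geodesic distance" means here, the sketch below approximates it by shortest paths on a k-nearest-neighbor graph of feature vectors. This graph-based approximation is swapped in for simplicity: it is not Locally Linear Embedding and not the implementation used for the contest, and the function names and toy features are assumptions.

```python
import heapq
import math


def euclidean(a, b):
    """Straight-line distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_graph(features, k):
    """Connect each feature vector to its k nearest neighbors (undirected)."""
    n = len(features)
    graph = {i: {} for i in range(n)}
    for i in range(n):
        dists = sorted(
            (euclidean(features[i], features[j]), j) for j in range(n) if j != i
        )
        for d, j in dists[:k]:
            graph[i][j] = d
            graph[j][i] = d
    return graph


def geodesic_distances(graph, source):
    """Dijkstra shortest-path lengths from `source` along graph edges."""
    dist = {node: math.inf for node in graph}
    dist[source] = 0.0
    heap = [(0.0, source)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue  # stale heap entry
        for v, w in graph[u].items():
            nd = d + w
            if nd < dist[v]:
                dist[v] = nd
                heapq.heappush(heap, (nd, v))
    return dist


# Toy 2D "features" lying along an L-shaped path (hypothetical data).
features = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (2.0, 1.0), (2.0, 2.0)]
graph = knn_graph(features, k=2)
dist = geodesic_distances(graph, source=0)
print("geodesic 0 -> 4:", dist[4])
print("euclidean 0 -> 4:", euclidean(features[0], features[4]))
```

The geodesic distance between the two ends follows the bend of the path, so it is longer than the straight-line distance. The point of a database-adaptive distance is that it follows the distribution of the features in this way.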

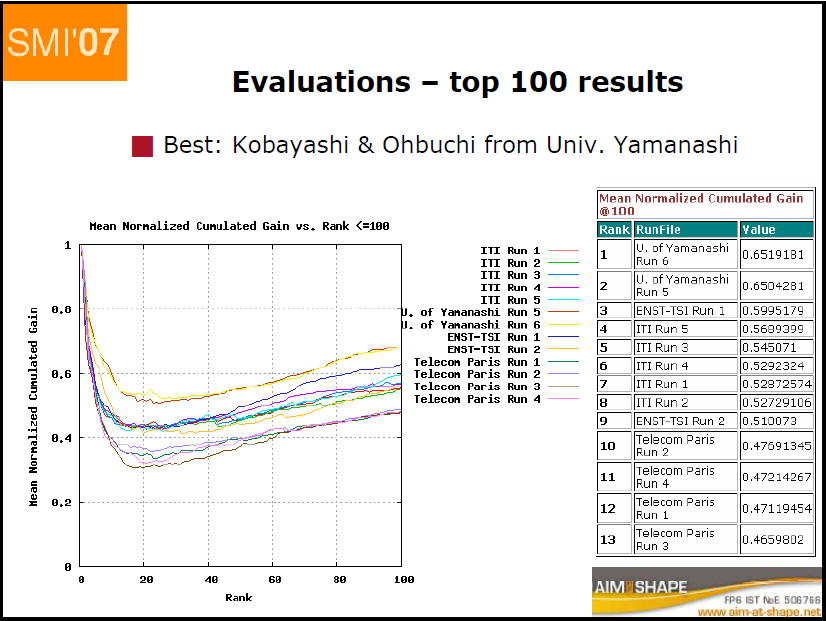

Mean Average Precision (relevant)

| Rank | RunFile | Value |
|---|---|---|
| 1 | U. of Yamanashi Run 6 | 0.43371364 |
| 2 | U. of Yamanashi Run 5 | 0.43185356 |
| 3 | ENST-TSI Run 1 | 0.3787826 |
| 4 | ITI Run 4 | 0.33206695 |
| 5 | ITI Run 3 | 0.32480213 |

Jun Kobayashi, Akihiro Yamamoto, Toshiya Shimizu, Ryutarou Ohbuchi, A database-adaptive distance measure for 3D model retrieval, SHREC 2007 CAD track paper, SMI 2007, June 2007 (PDF)

Comparison of dimension reduction method for 3D model retrieval

(3Dモデル検索のための次元削減手法の比較)

Our paper comparing dimension reduction methods was presented at the AMR 2007 workshop in Paris, France, in July.

Ryutarou Ohbuchi, Jun Kobayashi, Akihiro Yamamoto, and Toshiya Shimizu, Comparison of dimension reduction method for database-adaptive 3D model retrieval, In Proc. Fifth International Workshop on Adaptive Multimedia Retrieval (AMR 2007), Paris, France, July 2007. (PDF)

Tatsuma Atsushi's 3D model retrieval page

Our friend Tatsuma Atsushi has several powerful 3D model retrieval methods.

It appears that one of his methods would have tied for first place in the SHREC 2007 Face retrieval contest had it been entered in the track.

The method achieved NDCG@25 of 0.6379944 using the SHREC 2006 benchmark. This is quite remarkable.
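
For readers unfamiliar with the measure, the following is a minimal sketch of NDCG@k using the common log2 rank discount. It assumes `gains` lists the graded relevance of every model in the database in ranked order, so that sorting it yields the ideal ranking. The exact gain values and discount used by the SHREC benchmarks may differ, and the function names and toy gains are illustrative.

```python
import math


def dcg_at_k(gains, k):
    """Discounted cumulative gain over the first k results (log2 discount)."""
    return sum(
        g / math.log2(rank + 1) for rank, g in enumerate(gains[:k], start=1)
    )


def ndcg_at_k(gains, k):
    """DCG of the actual ranking divided by DCG of the ideal ranking."""
    ideal = dcg_at_k(sorted(gains, reverse=True), k)
    return dcg_at_k(gains, k) / ideal if ideal > 0 else 0.0


# Hypothetical graded relevance of a ranked list:
# 2 = highly relevant, 1 = marginally relevant, 0 = not relevant.
gains = [2, 0, 1]
score = ndcg_at_k(gains, k=3)
print(f"NDCG@3 = {score:.4f}")
```

A perfect ranking scores 1.0; placing the marginally relevant model below a non-relevant one, as in the toy list, lowers the score slightly.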

He also has a nifty online 3D model retrieval demo "3Doodle".

OpenCampusTest

Autoencoder

Classify2D

Cloth

Digit

Natural

My Background

I earned my Ph.D. degree at the Computer Science Department of UNC-Chapel Hill, where visionaries in computer science teach and from which many have graduated. Those who teach there include Prof. Frederick P. Brooks, Prof. Stephen Pizer, Prof. Henry Fuchs, Prof. Turner Whitted, and Prof. Dinesh Manocha. My principal advisor, Prof. Henry Fuchs, is a wonderfully stimulating person to be with. Its graduates frequently appear in the author lists at the prestigious SIGGRAPH. Chapel Hill is where I switched from computer architecture to computer graphics and worked on volume visualization and augmented reality for medical applications. There is something new and interesting happening at UNC-Chapel Hill in computer graphics, so please check it out.

From January 1994 to March 1999, I worked for IBM Tokyo Research Laboratory, in the Advanced Graphics and CAD group. I enjoyed working there with Masaki Aono, Hiroshi Masuda, Kazunori Miyata, Takayuki Ito, Kenji Shimada, Atushi Yamada, and others. I worked on a virtual environment surgical simulation system for the National Cancer Center in Tsukiji, Tokyo, realistic image synthesis using quasi-random sequences, digital watermarking of 3D models, compression techniques for CAD models, and other topics.

Contact

Ryutarou Ohbuchi (Japanese -> 大渕 竜太郎)

Computer Science Department

University of Yamanashi

4-3-11 Takeda, Kofu-shi,

Yamanashi-ken, 400-8511

Japan

Phone: +81-55-220-8570

my_last_name AT yamanashi DOT ac DOT jp